1. Upload the Video Clip You Want to Extend

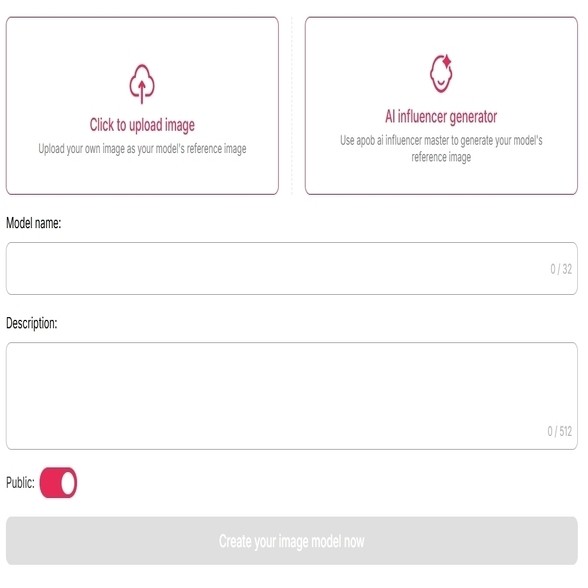

(Note: Follow our app guide to create an AI portrait, which can serve as the main character of your video. If your video has no character, create a random influencer to get started.)

Start with a short video that already has a strong subject, mood, or action. It can be a beauty shot, product clip, AI influencer video, fashion reel, travel clip, or cinematic scene.

For example, imagine you created a 4-second clip of a model turning toward the camera, but the motion ends before she fully reveals the outfit. Instead of regenerating the whole video, navigate to the "Reference video" panel and click Upload video. Once your clip is loaded, go to the bottom right and look at the Extend duration options (4, 5, 10, or 15 seconds). Select 4. The AI analyzes the motion in your original clip and generates an extension of the selected length, so you can match the video to your audio track without rebuilding your timeline.
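Choosing the duration comes down to simple arithmetic: subtract the clip length from the length you need, then pick the smallest Extend duration option that covers the gap. Here is a minimal Python sketch, assuming the options are 4, 5, 10, and 15 seconds as listed above. The function name and the 8-second audio track are hypothetical, and this is a planning aid rather than part of APOB AI.

```python
# Extend duration options, in seconds, as listed in the interface description.
EXTEND_OPTIONS = (4, 5, 10, 15)

def pick_extend_duration(clip_seconds, target_seconds, options=EXTEND_OPTIONS):
    """Return the smallest option that covers the gap between clip and target.

    Returns None when the clip is already long enough. If the gap is larger
    than every option, returns the largest option (extend again afterwards).
    """
    gap = target_seconds - clip_seconds
    if gap <= 0:
        return None
    for option in sorted(options):
        if option >= gap:
            return option
    return max(options)

# A 4-second clip that must fill an 8-second audio track needs 4 more seconds.
print(pick_extend_duration(4, 8))  # prints 4
```

If the gap falls between two options, the sketch rounds up, so you can trim the extra footage in your editor.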

2. Add or Generate a Last Frame for Better Continuity

If the video ends too suddenly, the extended part may feel disconnected. A strong last frame helps the AI understand where the next motion should begin.

For example, if your original clip shows a woman walking into a luxury hotel lobby, you may want the extended video to continue as she pauses, looks around, and smiles. By using the last frame as a visual anchor, you give the AI a clearer direction for posture, lighting, clothing, and background consistency.

In APOB AI's Extend Video interface, before you hit generate, click the "Generate last frame from source video" button on the right side of the interface. This extracts the final frame of your clip and places it in the "Add last frame" box.

Example instruction:

Prompt: Continue from the final frame. Keep the same woman, same outfit, same lighting, and same camera angle. She slowly turns her head toward the camera and gives a subtle confident smile.

3. Describe What Happens Next (How to Write a Prompt That Makes a Video Longer)

The description box (which allows up to 2000 characters) is where you direct the next part of the scene. Don’t just write “make it longer.” Tell the AI what motion, emotion, camera movement, and ending you want. For example, if you are making a perfume ad, your original clip may show the bottle on a table. The problem is that the video ends before the product feels premium. You can describe the next action like this:

Video Prompt Example:

The camera slowly pushes in toward the perfume bottle. Soft golden light reflects on the glass. A gentle mist moves in the background. The bottle remains sharp and elegant, with a luxury commercial feeling.

This gives the AI a clear visual path: camera movement, lighting, subject stability, background motion, and mood.

Video Prompt elements to include:

Main subject, next action, camera movement, lighting, mood, ending shot, and what must stay unchanged.
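If you write many prompts, it can help to assemble these elements the same way every time and check the result against the 2000-character limit of the description box. The Python sketch below is one possible way to do that. The function name, the labeled-sentence layout, and the sample values are assumptions for illustration; APOB AI does not require any particular prompt format.

```python
MAX_PROMPT_CHARS = 2000  # character limit of the description box

def build_extend_prompt(subject, next_action, camera, lighting, mood,
                        ending, keep_unchanged):
    """Join the prompt elements into one description and enforce the limit."""
    parts = [
        f"{subject} {next_action}.",
        f"Camera: {camera}.",
        f"Lighting: {lighting}.",
        f"Mood: {mood}.",
        f"Ending shot: {ending}.",
        "Keep unchanged: " + ", ".join(keep_unchanged) + ".",
    ]
    prompt = " ".join(parts)
    if len(prompt) > MAX_PROMPT_CHARS:
        raise ValueError(
            f"Prompt is {len(prompt)} characters; the limit is {MAX_PROMPT_CHARS}."
        )
    return prompt

prompt = build_extend_prompt(
    subject="The perfume bottle",
    next_action="stays centered on the table while a gentle mist moves behind it",
    camera="slow push-in",
    lighting="soft golden light reflecting on the glass",
    mood="luxury commercial",
    ending="close-up of the bottle, sharp and elegant",
    keep_unchanged=["bottle shape", "label", "table surface"],
)
print(prompt)
```

Keeping "what must stay unchanged" as its own list makes it harder to forget the consistency instructions when you revise the action or camera movement.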

4. Choose Duration, Quality, Resolution, and Audio

Use the duration setting based on your goal. A 4–5 second extension is usually best for small movements, social hooks, product reveals, and loopable endings. A 10–15 second extension works better when you need a longer moment, such as a walking shot, cinematic transition, outfit showcase, or story continuation.

For quality, choose a setting between Fast and Ultra S. Move toward Ultra S if the clip has a human face, detailed clothing, product packaging, or cinematic lighting. For resolution, FHD is a good choice when you want a clean final result for social media or a commercial preview.

If your video needs atmosphere, such as street ambience, studio sound, wind, footsteps, or a soft room tone, use native audio when available. APOB AI’s image-to-video page also describes native audio as a way to create context-aware soundscapes and voiceovers alongside video rendering.

5. Generate, Review, and Extend Again if Needed

After generation, watch the transition point carefully. Check the hands, face, hair, product shape, camera motion, and background details. If the extension is almost right but not perfect, adjust the prompt instead of starting from scratch.

For example, if a fashion clip has too much motion blur, change the prompt from:

'She turns quickly and walks away.'

to:

'She turns slowly and gracefully, with minimal motion blur. Keep her face and outfit consistent. The camera remains stable.'

For AI video generation, small prompt changes often make a big difference.

In many AI tools, objects melt or warp as a clip gets longer. Our advanced neural architecture and noise reuse strategies drastically reduce temporal drift, keeping your scenes structurally sound.

Unlike tools that return silent extended clips, APOB AI learns the rhythm and tone of your original video, ensuring ambient sounds and pacing flow naturally into the extended footage.

From 720P for quick social media drafts to Full HD (FHD) with "Ultra S" quality rendering, you control the final cinematic output and credit budget.

Social Media Reels, Shorts, and TikTok Videos

Sometimes a short clip has a strong hook but ends before the story lands. An AI video extender can add a reaction, closing gesture, extra camera movement, or CTA moment.

This use case matters because video remains central to marketing: Wyzowl’s 2026 data says 91% of businesses use video as a marketing tool, and 93% of video marketers see video as an important part of their overall strategy. (Wyzowl) YouTube also allows square or vertical Shorts up to three minutes for eligible uploads, giving creators more room to tell longer short-form stories. (Google Help)

Product Demos and E-commerce Videos

Product videos often need a few extra seconds to show texture, packaging, scale, or how the item is used. An AI video expander can extend a product reveal without setting up another shoot.

Short-form vertical video also influences shopping behavior. In a Media.net survey reported by TV Technology, 34% of consumers said they want product or shopping content in vertical video, and 77% said product reviews are important in purchase decisions. (TV Tech)

Tutorials, How-To Videos, and Educational Clips

Tutorial videos often need just a little more time to show the final step. Instead of stretching footage or adding still frames, AI can extend the movement itself.

In the same Media.net survey, 44% of consumers said they wanted more lifestyle or how-to videos in short-form vertical formats. (TV Tech)

Landing Page and Ad Creative

On a landing page, a video often needs a smoother loop or a longer product moment. If the clip cuts abruptly, the page feels less polished. AI video extension can create a more natural loop, a cleaner ending, or a stronger brand moment.

The AI video generator market itself is growing quickly. Grand View Research estimated the global AI video generator market at USD 788.5 million in 2025 and projected it to reach USD 3.44 billion by 2033. (Grand View Research)

Cinematic Storytelling and Mini Scenes

For filmmakers, AI video extension can help bridge shots, create atmospheric pauses, or continue a character’s movement. It is especially useful for mood shots: a person walking into fog, a car driving down a neon street, a bride turning in a wedding dress, or a character entering a room.

Short-form vertical video is no longer limited to social platforms. A Media.net survey found that 90% of over 1,000 U.S. adults were open to seeing short-form video content on publisher sites, and 61% said short-form vertical video is more engaging than articles, podcasts, or long-form video. (TV Tech)

1. Save Time Without Reshooting

An AI video expander helps you continue a clip without setting up the same scene again. This is useful when the original shot is hard to reproduce, such as a sunset, a specific outfit, a product setup, or an AI-generated character scene.

2. Turn One Clip into Multiple Assets

A short clip can become a social hook, a longer ad, a loopable background video, or a landing page hero. For creators and marketers, this makes content iteration faster and more flexible.

3. Better Storytelling Control

A clip that ends too early can feel unfinished. AI extension lets you add the missing beat: a reaction, a smile, a product close-up, a camera push-in, or a final moment that makes the scene feel complete.

4. Useful for AI-Generated Videos

AI videos often come out short. An extender helps creators build longer scenes from strong short generations instead of regenerating everything from the beginning.

5. Lower Production Cost

For simple extensions, AI can reduce the need for actors, sets, cameras, reshoots, and manual VFX work. That makes it useful for small teams, solo creators, and fast-moving marketing workflows.

1. It Does Not Replace Editing Judgment

AI can generate new frames, but you still need a creative eye. For professional results, review pacing, continuity, color, sound, and the final cut before publishing.

2. Copyright and Consent Still Matter

If you extend a video containing real people, branded products, copyrighted characters, or third-party footage, make sure you have the right to use and modify it. AI does not remove the need for proper permissions.

What is an AI video extender?

An AI video extender makes a video longer by continuing the action, motion, or scene from the original clip. Instead of simply slowing down footage or repeating frames, it generates new video content that follows the visual direction of the source video.

How can I make a video longer without making it look slow?

Prompt the AI to continue the camera push-in, add subtle reflections, or show the product from a slightly different angle. This makes the longer version feel intentional rather than dragged out.

What is the best prompt for extending a video?

A good prompt should include three things: what happens next, what must stay unchanged, and the desired camera style.

Example:

Continue this video naturally. Keep the same person, same outfit, same lighting, and same background. The subject slowly turns toward the camera and smiles softly. Smooth camera movement, realistic motion, no sudden cuts.

Why does my extended video change the face or outfit?

This usually happens when the motion is too complex, the prompt is too vague, or the extension is too long. Use a shorter duration, add a last frame if possible, and include instructions like: Keep the same face, same hairstyle, same outfit, same body proportions, and same lighting.

How long should I extend a video?

For realistic results, start small. Use 4–5 seconds for facial expressions, product shots, fashion turns, and loopable endings. Use 10–15 seconds for walking shots, scene transitions, or cinematic atmosphere.

How do I make an AI-extended video look cinematic?

Use camera language in your prompt. Words like “slow dolly-in,” “gentle handheld movement,” “smooth orbit,” “shallow depth of field,” “golden hour lighting,” and “soft background motion” give the AI a clearer cinematic direction.

What should I do if the extended video looks blurry?

Try a higher quality setting, reduce the extension duration, avoid fast action, and make your prompt more specific. For human subjects, ask for “slow natural movement” instead of “fast action.” For products, ask the AI to “keep the label sharp and unchanged.”

Can I extend an AI-generated video multiple times?

Yes, but each round can introduce small changes. For best results, extend the strongest version, keep each extension short, and review continuity before extending again. If the character or object begins to drift, go back to the previous cleaner version.

I am noticing a "jump" or flicker exactly where the original video ends and the extended part begins. How do I fix this?

This is known as a boundary discontinuity. To fix it in APOB AI, make sure you use the "Generate last frame from source video" feature. This gives the model the exact pixel and lighting data of your final frame as the anchor for the new generation, which smooths out the transition.

Why do objects in my video start to melt or warp when I extend the video to 15 seconds?

This is called "temporal drift". Generative models sometimes struggle to remember the structural identity of an object over long periods. To combat this, use rigid, descriptive prompts (e.g., "static camera," "solid structural shape") and try extending the video in shorter, iterative bursts rather than generating a massive 15-second block all at once.
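To plan shorter bursts, you can split the total extension into rounds that each use one of the shorter duration options. The Python sketch below assumes 4- and 5-second rounds (the two shortest options mentioned on this page) and that each extended clip is uploaded again as the source for the next round. The function name is hypothetical.

```python
def plan_extension_bursts(total_seconds):
    """Split a total extension into 5- and 4-second rounds.

    Prefers as many 5-second rounds as possible. Returns None when the
    total cannot be built from 4s and 5s (for example 6, 7, or 11 seconds).
    """
    if total_seconds <= 0:
        raise ValueError("total_seconds must be positive")
    for fives in range(total_seconds // 5, -1, -1):
        rest = total_seconds - 5 * fives
        if rest % 4 == 0:
            return [5] * fives + [4] * (rest // 4)
    return None

print(plan_extension_bursts(15))  # prints [5, 5, 5]
print(plan_extension_bursts(13))  # prints [5, 4, 4]
```

Review continuity after each round, and go back one step if the subject starts to drift.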

Will the AI extender match the frame rate (FPS) of my original 60fps cinematic footage?

Most current AI video generation models default to standard cinematic frame rates like 24fps or 30fps. If you upload 60fps footage, you might experience slight jittering in the extended portion. We recommend conforming your timeline to 30fps, or using post-production AI frame interpolation tools to smooth the final output.
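One way to conform 60fps footage to 30fps before uploading is the free FFmpeg command-line tool and its `fps` filter. The Python sketch below only builds the command. It assumes FFmpeg is installed on your machine, and the function name and file names are hypothetical placeholders.

```python
def build_conform_command(source_path, output_path, target_fps=30):
    """Build an FFmpeg command that re-times the video to target_fps.

    The fps filter drops or duplicates frames to reach the target rate;
    the audio stream is copied without re-encoding.
    """
    if target_fps <= 0:
        raise ValueError("target_fps must be positive")
    return [
        "ffmpeg",
        "-i", source_path,
        "-vf", f"fps={target_fps}",
        "-c:a", "copy",
        output_path,
    ]

command = build_conform_command("source_60fps.mp4", "source_30fps.mp4")
print(" ".join(command))
# To run it: import subprocess; subprocess.run(command, check=True)
```

Conforming the source first means the original and the extended portion share one frame rate, so the join is less likely to jitter.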

Can I use this to extend scenes from famous movies for my YouTube video essays?

As a professional creator, you must be extremely careful. While the technology can technically extend any video, using copyrighted IP without permission to train or generate content can lead to strikes and severe legal issues. Always ensure you own the rights to the base footage you are extending.