1. Seamless Semantic Bonding of Audio and Vision

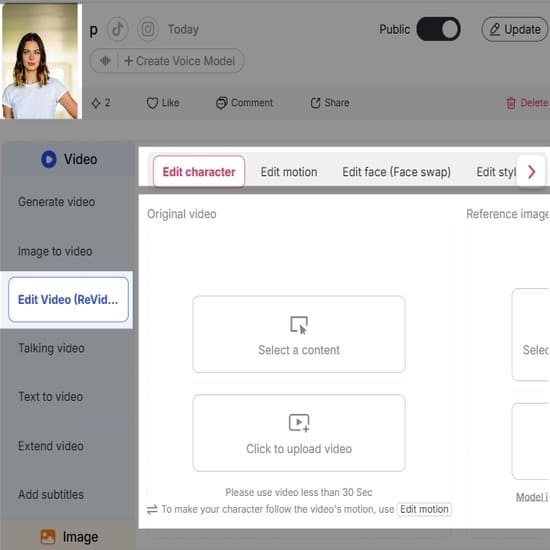

Traditionally, adding sound to AI video has been a disjointed process, with separate tools handling lip sync, sound effects, and ambient mixing. Kling 2.6 changes this by generating "semantically bonded" audio and video simultaneously. Whether the clip is an energetic, fast-paced rap performance or a calm, soothing ASMR segment, the audio stays synchronized with the action on screen. With APOB AI, creators can combine this native audio feature with localized AI voices. This removes the "mismatched" look common in AI videos and lets social media managers produce a dubbed and synced export within minutes.

2. The "Physics King": Unparalleled Anatomical Precision

Kling 2.6 has earned the nickname "Physics King" thanks to its enhanced physics engine, designed to tackle the "high-risk" problems that plagued earlier versions such as Kling 2.1: distorted hands, unnatural face rotation, and clothing physics. It also handles subtler details, including the weight of a character's gait and the sway of hair. If you're looking for this kind of "cinematic" effect but don't want the technical hassle of complex rigging, the "APOB AI Kling 2.6 Guide" walks you through how to achieve this level of anatomical correctness with simple motion references. APOB AI makes the "Physics King" process easy for freelancers.

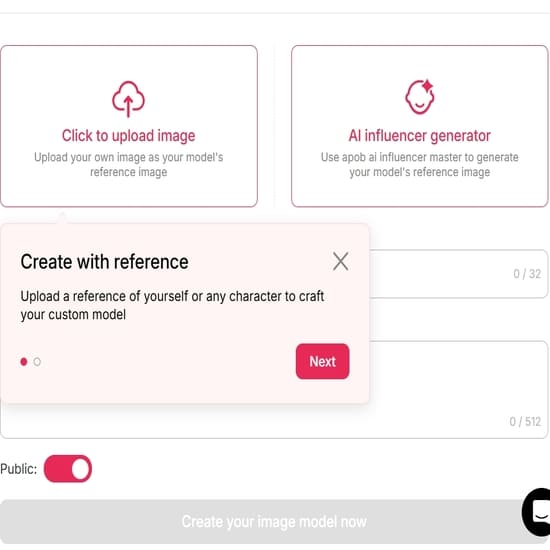

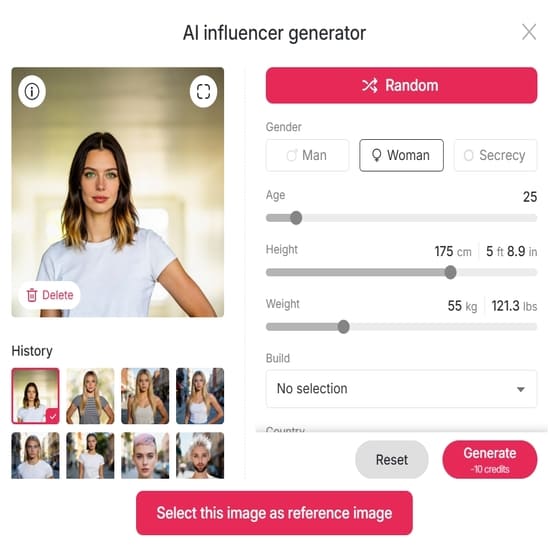

3. Identity Stability through "Elements" Multimodal Memory

Consistency is everything in commercial production. Kling 2.6 introduces a multimodal memory system called "Elements," which lets you upload up to four images to "lock" your brand spokesperson into every frame of every shot. APOB AI makes building these "identity libraries" intuitive. If you host your character assets with us, you can maintain a consistent look across an entire marketing campaign. This is essential for any brand looking to scale its video output without appearing amateur or sloppy.

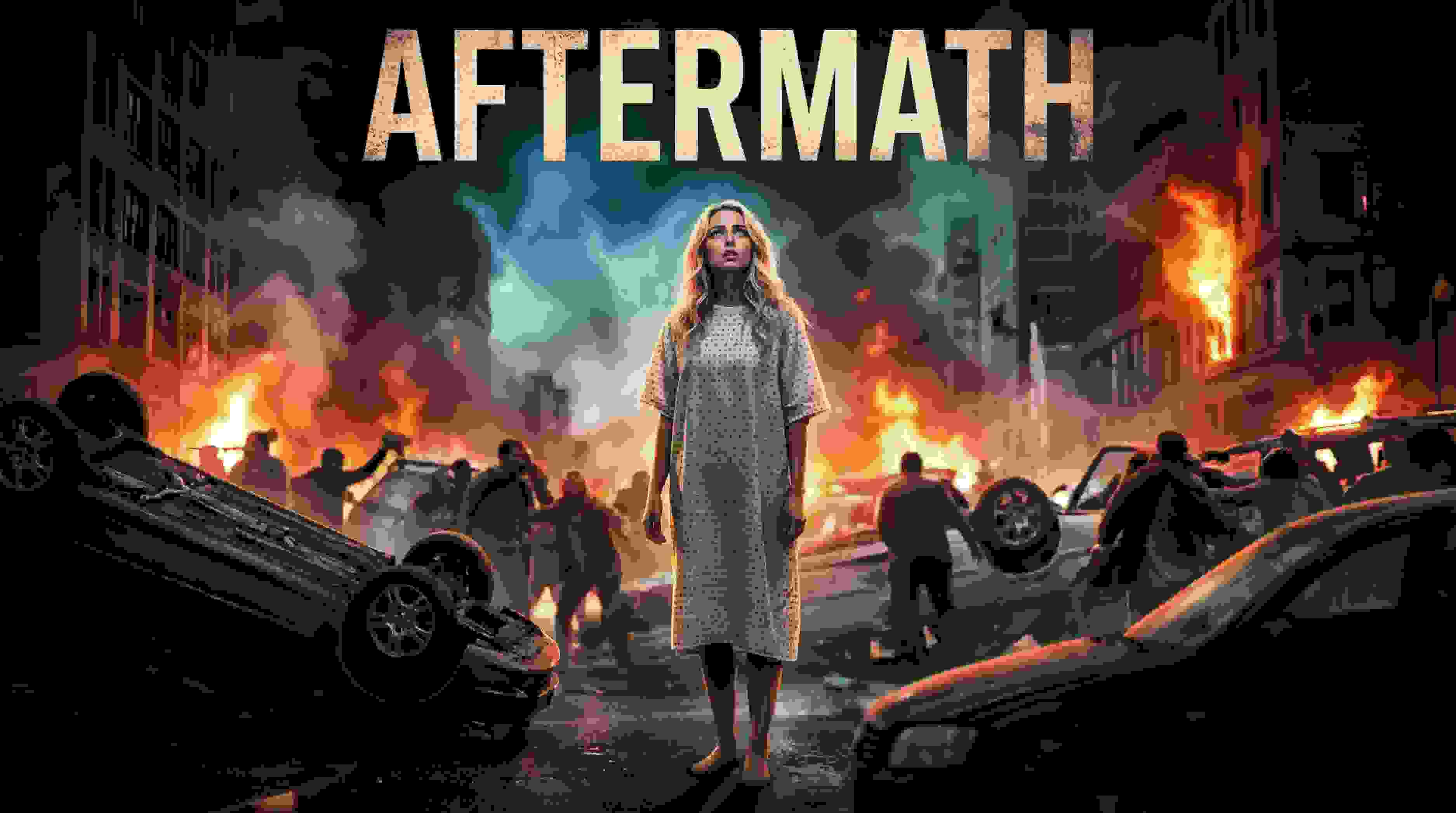

1. Marketing and Brand Spokesperson Videos

Brands are increasingly using Kling 2.6 motion control to create high-end corporate presenters and virtual ambassadors. By recording one employee’s performance and transferring it onto a stylized character or 3D mascot, they can create hundreds of social ads or product-launch videos with perfect eye contact, body language, and tone of voice.

The same workflow supports “localized” ad campaigns, in which one presenter’s body language is paired with different character designs for different regions or markets.

2. E-Learning and Educational Instructional Clips

Educators use Kling 2.6 to turn existing material into engaging video content, complete with narration. The model preserves the authority of an instructor’s gestures and facial expressions by transferring them to virtual characters that can present in various languages with accurate lip sync. This helps an e-learning platform grow its content library without a production team, studio, or equipment.

3. Game Development and Cinematic Pre-Visualization

In game development, Kling 2.6 is used to animate avatars and prototype movements for non-player characters (NPCs). The user uploads a character reference image from a concept artist and a reference video of a human performer, and the model animates realistic walking, fighting, or other actions for use in a storyboard or a final "B-roll" sequence. This speeds up development and lets smaller studios achieve an "AAA" cinematic look without the cost and complexity of a dedicated motion capture studio.

1. Decoupling Performance from Appearance

The main advantage of Kling 2.6 is its capacity to isolate the human performance from the visual appearance. Companies can drive any number of characters or brand mascots from a single high-quality human performance reference. This is called "motion control," and it allows for a "record once, deploy everywhere" strategy.

2. Massive Reduction in Production Overhead

Moving to AI-powered video production can drastically reduce traditional filming expenses. Businesses can expect savings of 60% to 70% on content creation costs, along with a reduction of up to 80% in production time.

3. Accessible and Scalable Pricing

With rates ranging between $0.07 and $0.14 per second on sites such as Fal.ai, Kling 2.6 functions as a "workhorse utility." Per-second pricing avoids the "bill shock" common in enterprise offerings, and it lowers the barrier to entry for freelancers because it doesn't require high-end local GPUs or rigging.
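To make the per-second pricing concrete, here is a minimal Python sketch that estimates the cost range for a clip. It uses only the $0.07 and $0.14 rates quoted above. The function name `clip_cost_range`, the `takes` parameter, and the assumption that billing is strictly linear per second (no minimum charge, no rounding) are illustrative and not taken from any provider's documentation.

```python
from decimal import Decimal

# Per-second rates quoted above for sites such as Fal.ai (USD).
RATE_LOW = Decimal("0.07")
RATE_HIGH = Decimal("0.14")


def clip_cost_range(seconds: int, takes: int = 1) -> tuple[Decimal, Decimal]:
    """Return the (low, high) cost in USD for `takes` generations of a clip.

    Hypothetical helper: assumes cost scales linearly with billed seconds.
    """
    if seconds <= 0 or takes <= 0:
        raise ValueError("seconds and takes must be positive")
    billed_seconds = seconds * takes
    return RATE_LOW * billed_seconds, RATE_HIGH * billed_seconds


if __name__ == "__main__":
    low, high = clip_cost_range(10)
    print(f"One 10-second clip: ${low:.2f} to ${high:.2f}")
    low, high = clip_cost_range(10, takes=3)
    print(f"Three takes of that clip: ${low:.2f} to ${high:.2f}")
```

Under these assumptions, a single 10-second clip lands between $0.70 and $1.40, and three takes of the same clip cost between $2.10 and $4.20, which is why retries matter when budgeting.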

1. Quality Dependency on Reference Material

The result can only be as good as the input. If the initial motion reference is poorly lit or contains "occluded limbs" (limbs hidden behind the body), those flaws carry over into the AI-generated result and degrade its quality. This often leads to unnecessary credit consumption, as users have to retry several times to obtain a clean result.

2. Compositional and Character Limits

Current technical limitations restrict Kling 2.6 to handling only one primary character per generation. This makes it difficult to depict realistic interactions between multiple people or large groups in a scene, particularly in narrative or advertising scenarios with multiple characters.

3. Temporal Instability and Resource Intensity

Although short clips of 5 to 10 seconds can be of high quality, "background wobble" or "morphing objects" can occur in longer clips. The high frame rate of 30 FPS is also a premium feature: "Pro" generations use significantly more processing power and credits than "standard" versions, which can hurt cost-efficiency, especially for long-form content.

Q1: Is Kling 2.6 free to use?

A1: While Kling 2.6 is primarily a premium model requiring subscriptions on official channels, you can access APOB AI to explore its capabilities. APOB AI often provides trial credits or accessible entry points, allowing users to test Kling 2.6 motion control features without significant upfront investment.

Q2: How does Kling 2.6 handle multiple characters?

A2: The model supports multi-character scenes, but the motion control module is currently optimized for a single dominant subject. For complex interactions, APOB AI recommends focusing on one primary actor to avoid "joint breaking" or overlapping artifacts that occur when the AI confuses multiple skeletal structures.

Q3: Can I use Kling 2.6 for commercial projects?

A3: Yes. Most providers, including APOB AI, grant commercial usage rights for content generated under paid tiers. This makes it an ideal tool for creators looking to monetize high-quality AI animations for marketing or brand storytelling.

Q4: What is the maximum resolution of Kling 2.6 videos?

A4: It natively generates high-definition 1080p at 30 FPS. To achieve cinematic 4K quality, APOB AI users often combine the output with integrated upscaling tools to ensure the final render meets professional standards.

Q5: Why does my character’s face sometimes change mid-video?

A5: This is the "Morphing Object Problem." To maintain facial consistency, the APOB AI guide suggests using high-contrast reference images and including prompt anchors like "consistent facial features." This ensures the model prioritizes identity along with motion.

Q6: Does Kling 2.6 support languages other than English and Chinese?

A6: It natively supports English and Mandarin. For other languages, APOB AI suggests a workaround: upload your localized audio track and use the built-in lip-sync feature to match the character's mouth movements to any language.

Q7: What video formats are supported for motion reference?

A7: Standard formats like .mp4, .mov, and .webm are supported. For the best results on APOB AI, ensure your reference video has a clear silhouette and minimal background clutter to allow for precise skeletal extraction.
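As a rough pre-flight check before uploading, a short Python sketch can confirm that a reference file uses one of the formats listed above. The helper name `is_supported_reference` is hypothetical and not part of any Kling or APOB AI tooling. It inspects only the file extension, so it cannot judge silhouette clarity or background clutter.

```python
from pathlib import Path

# Extensions listed in the answer above.
SUPPORTED_EXTENSIONS = {".mp4", ".mov", ".webm"}


def is_supported_reference(filename: str) -> bool:
    """Return True if the file extension is one of the listed formats.

    Hypothetical helper: checks the extension only, not the file contents.
    """
    return Path(filename).suffix.lower() in SUPPORTED_EXTENSIONS


if __name__ == "__main__":
    for name in ["dance_take1.MP4", "walk_cycle.mov", "old_capture.avi"]:
        status = "ok" if is_supported_reference(name) else "convert first"
        print(f"{name}: {status}")
```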

Q8: How long does it take to generate a clip?

A8: A 5-to-10-second clip typically takes 3 to 5 minutes. The stable infrastructure at APOB AI keeps rendering speeds consistent even during peak usage hours.

Q9: Can Kling 2.6 animate non-human characters?

A9: Absolutely. As long as the character (anime, 3D, or animal) has a human-like skeletal structure, APOB AI can successfully map human motion onto it, opening up massive possibilities for virtual influencers and mascots.

Q10: Is there a "free" way to access Kling 2.6 for testing?

A10: Yes, you can visit the APOB AI Kling 2.6 guide to learn how to access the model and use its tools to get started with trial resources.